Valve use in Data Centers

This blog post was written entirely by humans.

Until recently, most data centers relied on air cooling to keep processing chips from overheating. Fans blow cooled air over the servers, cooling them by convection. As late as 2014, the power density of the average server rack was 4-5kW, and air-cooling systems were able to provide sufficient cooling.

With the advent of AI chips, however, came ever-increasing power densities. By 2024 the average power density had increased to 12kW/rack, but this number included all server farms. At the upper end, AI chips like Nvidia's A100, the first chip in widespread AI use, had a power density of >60kW/rack.

This trend shows no signs of slowing: power density on Nvidia's current Blackwell GB200 chips is 120kW/rack, and Nvidia's new Vera Rubin NVL and Rubin Ultra NVL 576 continue the upward power density march.

At the very top of the heap is the upcoming Kyber rack, which is expected to have a power density of ~600kW. Right now this is beyond the cooling capabilities of most data centers (more on that in a moment), so the Kyber will require a rack-sized sidecar to handle cooling. This would be like a "mini data center within a data center," where the cool data center air is used to cool the coolant.

As ponderous as this arrangement is, the trend to higher power densities continues even further: Nvidia expects power density to exceed 1MW/stack before 2030.

At these power densities, cooling becomes such a formidable obstacle that it is a driving factor (along with constantly available solar power) in the recent SpaceX-xAI partnership to build data centers in space.

Short of celestial data centers, how do we tackle the cooling problem here on Earth? Four main designs (or combinations thereof) are in varying degrees of use:

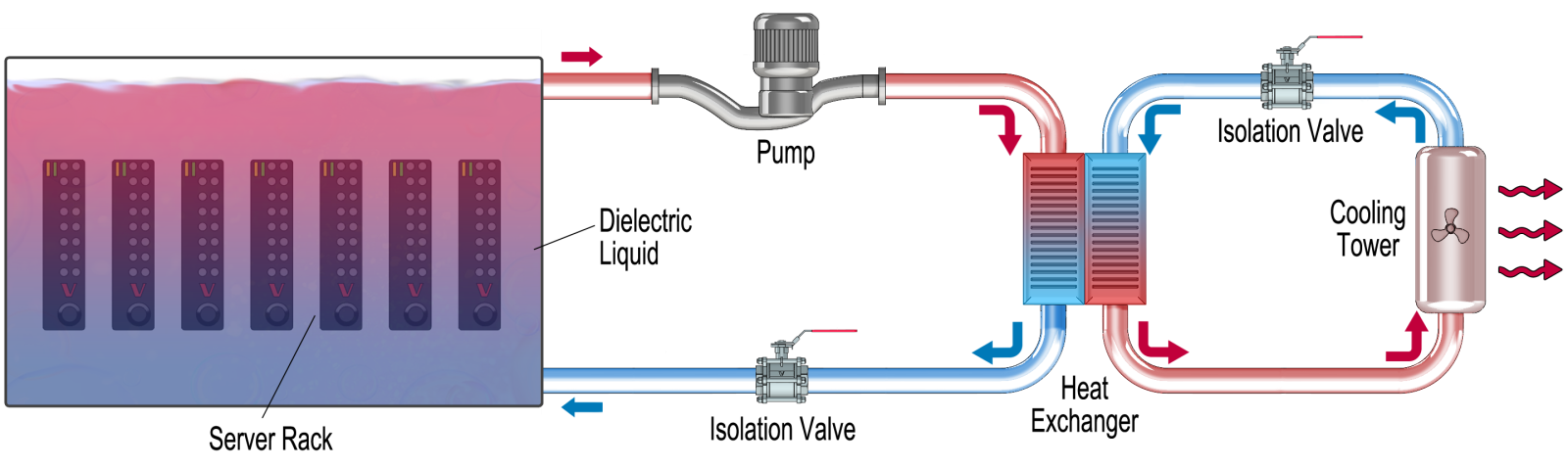

1. Single-Phase Immersion

Fig. 1 – Single-Phase Immersion Cooling

Single-phase immersion cooling involves submerging the entire server rack in chilled dielectric fluid. Heat transfer happens directly from the chip to the fluid, and the warmed fluid is pumped to an external heat exchanger which rejects the heat to the outdoors. The chilled fluid is then pumped back to the server tank and the cycle is repeated (Fig. 1).

Immersion technology, while an elegant theoretical solution, has not gained widespread adoption. Its heat transfer is not much greater than that of other technologies (e.g. cold plates), yet maintenance and repair are greatly complicated: any repair or replacement involves draining the tank, which necessarily shuts down the entire rack of servers even if only one server requires attention. Thus, immersion cooling is not yet the primary choice in liquid cooling.

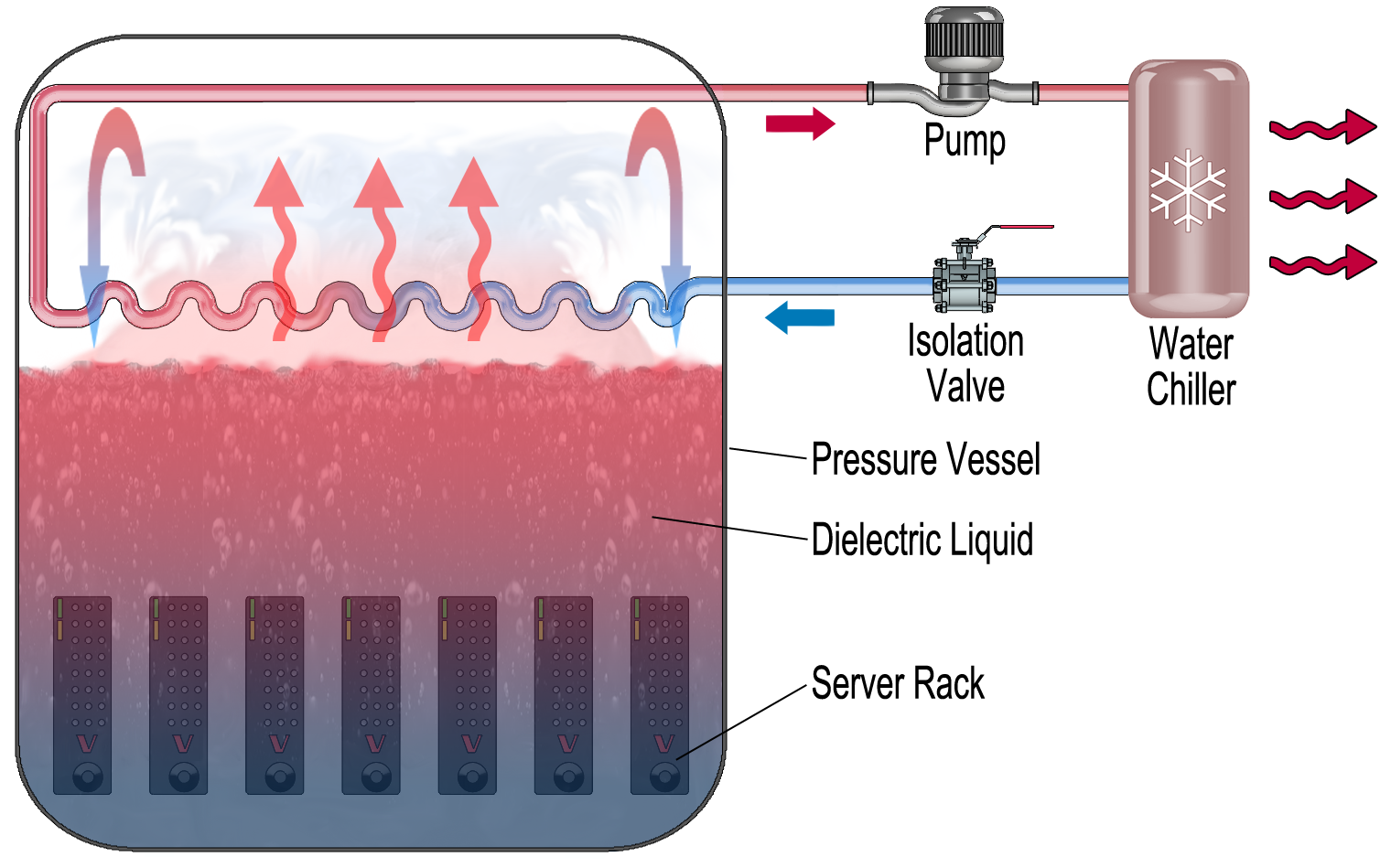

2. Two-Phase Immersion

Fig. 2 – Two-Phase Immersion Cooling

Two-phase cooling uses immersion technology plus a phase change. The server racks are immersed in a pressure tank partially filled with dielectric fluid. The dielectric absorbs so much heat that it undergoes a phase change from liquid to vapor; i.e., it boils. Heat is removed from the vapor via coils carrying chilled water-glycol (or another refrigerant). The cooled dielectric vapor condenses and the process repeats (Fig. 2).

The key advantage lies with the phase change, which absorbs tremendous amounts of heat energy. For example, measured from a 0°C baseline, steam at 100°C carries roughly six times the energy of liquid water at 100°C. The energy required to change phase is called "latent heat" and is far greater than the "sensible heat" absorbed by simply warming a refrigerant without a phase change (as in the single-phase immersion cooling discussed previously).
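As a rough check on the steam comparison, the sketch below uses approximate textbook values for water at atmospheric pressure (specific heat of about 4.19 kJ/kg·K and latent heat of vaporization of about 2257 kJ/kg). The function and constant names are ours, chosen for illustration, and real dielectric coolants have different property values.

```python
# Approximate textbook properties of water at atmospheric pressure (assumed values).
CP_WATER_KJ_PER_KG_K = 4.19        # specific heat of liquid water
H_VAPORIZATION_KJ_PER_KG = 2257.0  # latent heat of vaporization at 100 °C


def sensible_heat_kj(mass_kg, delta_t_c, cp=CP_WATER_KJ_PER_KG_K):
    """Energy absorbed by warming a liquid without changing phase."""
    return mass_kg * cp * delta_t_c


def latent_heat_kj(mass_kg, h_fg=H_VAPORIZATION_KJ_PER_KG):
    """Energy absorbed by boiling a liquid at constant temperature."""
    return mass_kg * h_fg


# Energy in 1 kg of liquid water at 100 °C, measured from a 0 °C baseline.
water_at_100 = sensible_heat_kj(1.0, 100.0)
# Energy in 1 kg of steam at 100 °C: the same warming plus the phase change.
steam_at_100 = water_at_100 + latent_heat_kj(1.0)

ratio = steam_at_100 / water_at_100
print(f"water: {water_at_100:.0f} kJ, steam: {steam_at_100:.0f} kJ, ratio: {ratio:.1f}")
```

Under these assumed values the ratio comes out a little above six, which is where the "six times" figure comes from.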

Two-phase systems offer the potential for much greater cooling capability than single-phase systems, but with significant added complexity. Two-phase systems must handle both liquid and vapor; refrigerants are specially engineered dielectrics as opposed to simple water/glycol mixes; and boiling needs to be tightly controlled to prevent pressure spikes. Also, two-phase cooling has the same maintenance and repair drawbacks as single-phase immersion cooling.

For these reasons, two-phase cooling has not been widely deployed yet, either.

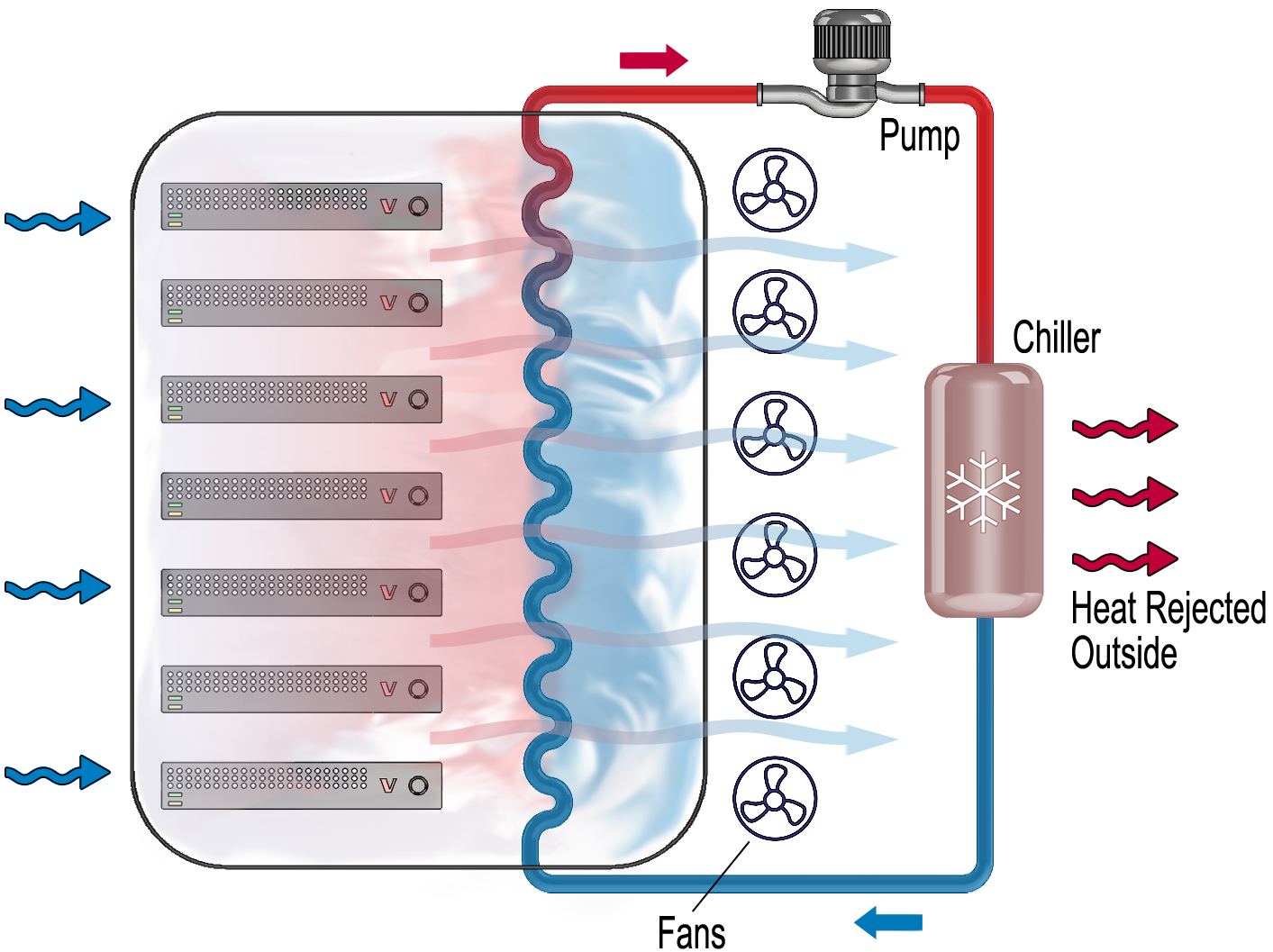

3. Rear Door

Fig. 3 – Rear Door Cooling

Rear door cooling is a supplemental cooling process where cool air within the data center is drawn in over the server rack via fans at the rear of the rack. The warm exhaust air passes over an air-to-liquid heat exchanger, and the warmed liquid is piped back to a supplemental chiller where the heat is removed and the process begins again (Fig. 3).

Rear door cooling helps reduce the load on the overall HVAC system.

The heat exchange occurs closer to the chip than with room-level air cooling, thereby increasing cooling capability. However, since rear door cooling still relies on convection (vs. conducting heat away), its application at ever-increasing power densities is limited.

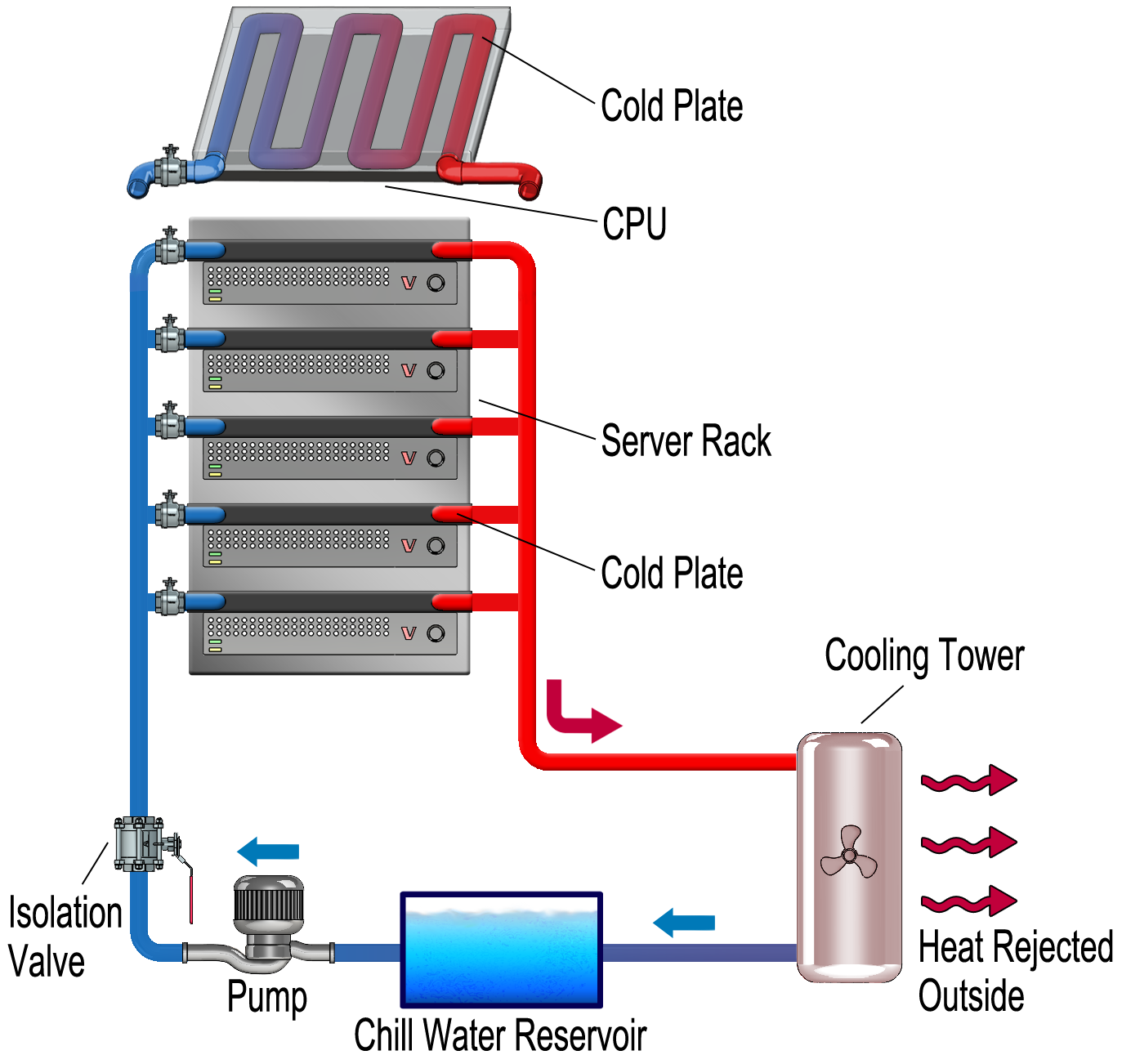

4. Cold Plate

Fig. 4 – Cold Plate Cooling

Cold plate cooling consists of a heat exchanger mounted directly to the chip. Chilled refrigerant passes through internal passages in the cold plate, and heat is conducted from the chip to the cold plate. The return loop is routed back to a chiller where the now-heated refrigerant is cooled through a second loop which then rejects heat to the outdoors (Fig. 4).

Maintenance and repair are greatly simplified with cold plate cooling, as individual servers can be isolated. Also, cold plate cooling typically uses simpler water/glycol mixes, although some suppliers are starting to offer more exotic dielectric media as well. Currently cold plate cooling is about as effective as single-phase immersion cooling, and this combination of simplicity and effectiveness has led to widespread adoption in AI data centers.

The rest of this article will discuss fluid control challenges with cold plate technology, specifically as it pertains to valves (we are a valve company, after all!).

How is direct-to-chip cold plate cooling controlled?

Refrigerant flow rate and temperature are critical parameters for controlling cold plate cooling. Temperature is controlled centrally by the chiller. Controlling flow rate for each server rack, however, poses a bit of a challenge. Here is where valves come into play.

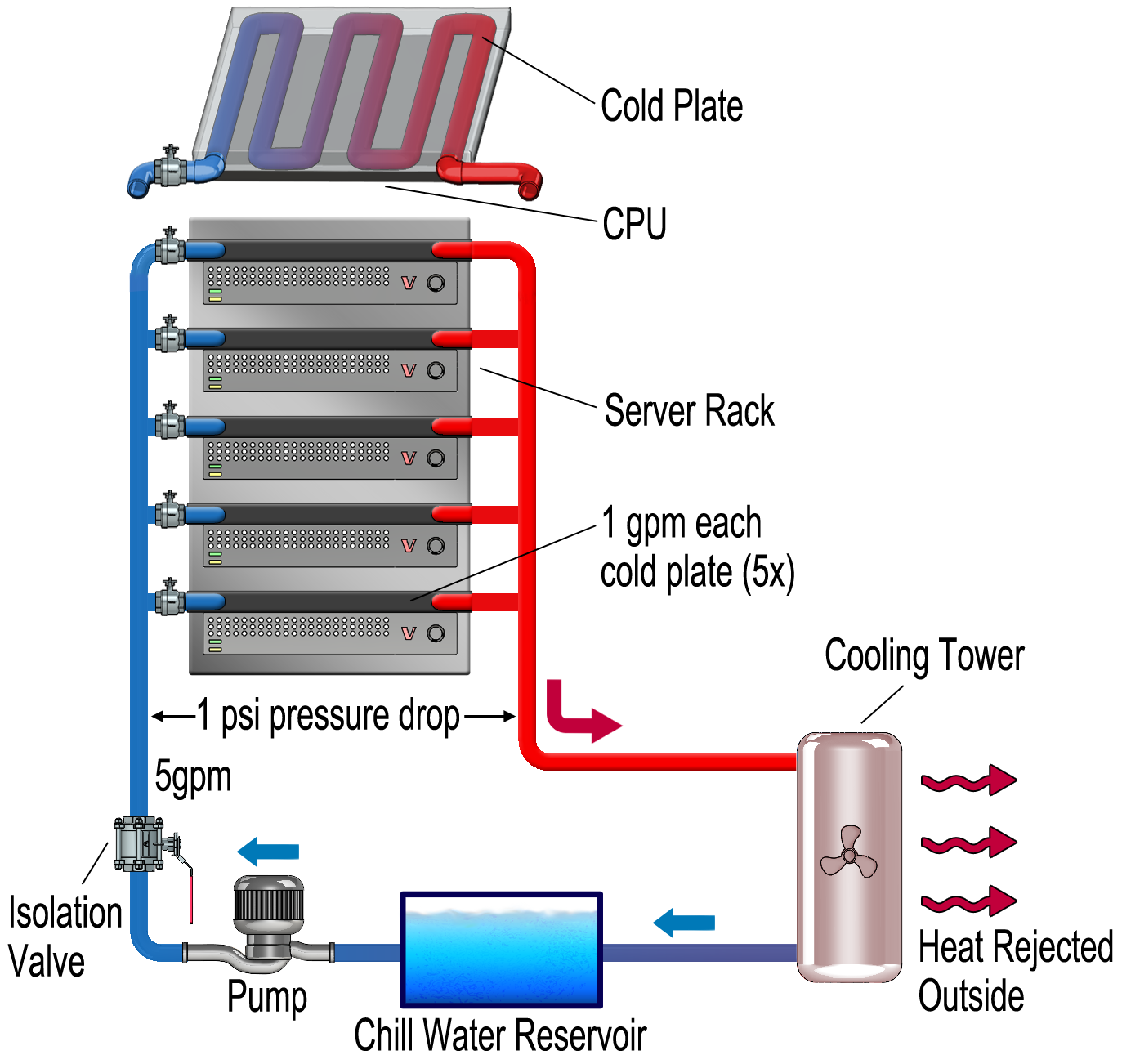

Fig. 5 – Cold Plate Cooling Loop (5 Servers)

Consider the setup in Fig. 5, a cold plate design. For illustrative purposes, let's assume each cold plate requires a flow rate of 1gpm. Thus, in a five-server rack the total loop flow rate is 5gpm.

This is all well and good if there are no process changes. But what happens if a server is temporarily removed, say for maintenance or repair? In this case there is still 5gpm total loop flow, but now that flow is divided among four cold plates instead of five, so the flow per cold plate is 5gpm/4 = 1.25gpm. This represents a 25% increase, which could have undesirable effects on the cold plate such as erosion, resulting in premature performance degradation, leaks or even failure.

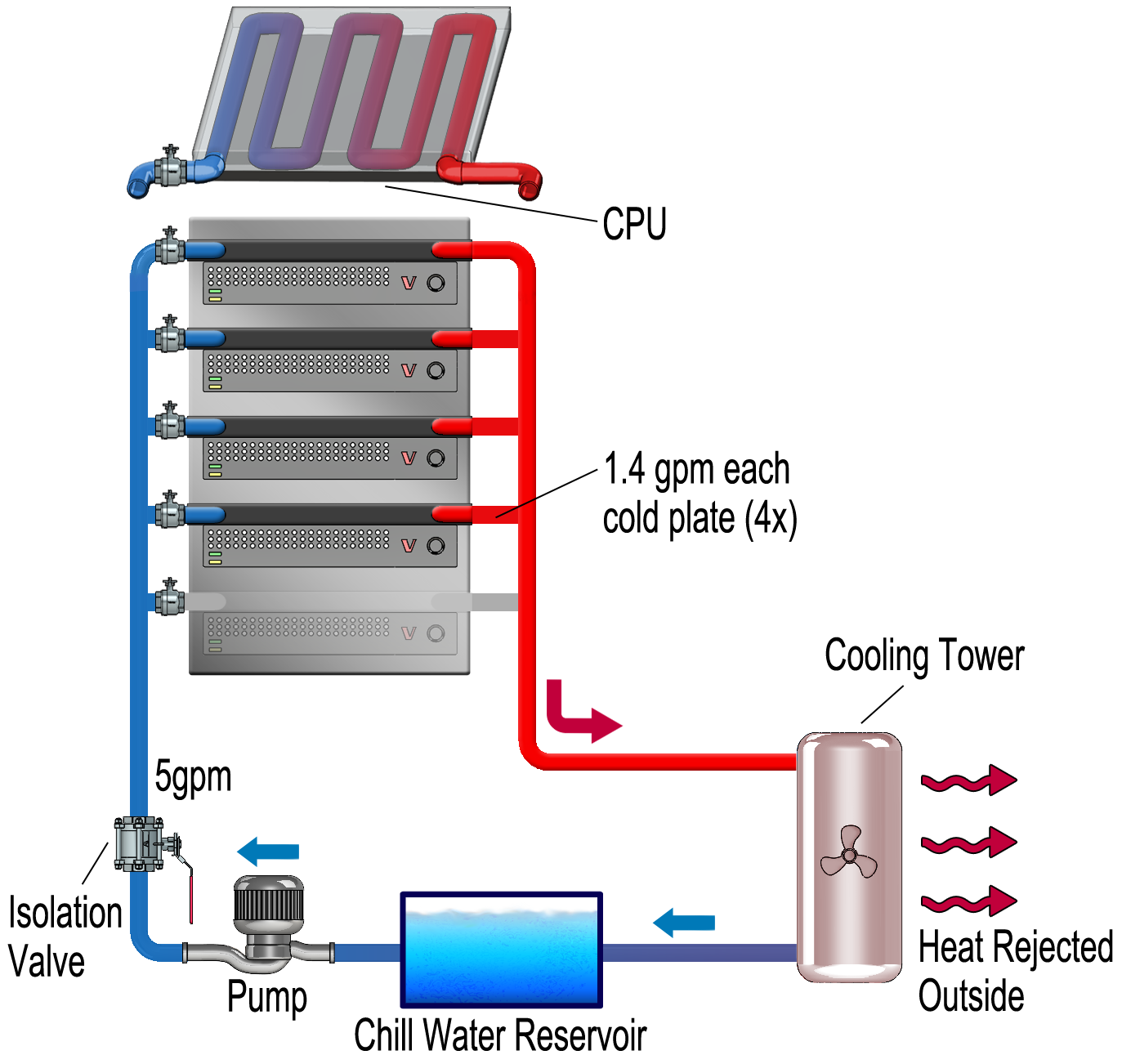

Fig. 6 – Cold Plate Cooling Loop (4 Servers)

Fig. 6 shows the flow divided among four cold plates instead of five, at 1.25gpm per cold plate.

Therefore, some sort of flow control is required. How do we maintain a flow of 1gpm per cold plate when we remove one?
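The arithmetic behind Figs. 5 and 6 can be sketched in a few lines. This assumes identical cold plates that split a fixed total loop flow equally; the function name is ours, for illustration only.

```python
def flow_per_plate_gpm(total_gpm, active_plates):
    """Flow through each cold plate, assuming an equal split of a fixed total flow."""
    if active_plates <= 0:
        raise ValueError("at least one cold plate must be in the loop")
    return total_gpm / active_plates


nominal = flow_per_plate_gpm(5.0, 5)             # all five servers installed
one_removed = flow_per_plate_gpm(5.0, 4)         # one server pulled for service
increase_pct = (one_removed - nominal) / nominal * 100

print(f"{nominal:.2f} gpm -> {one_removed:.2f} gpm (+{increase_pct:.0f}%)")
```

The same function shows how quickly the problem grows: with two servers removed and no flow control, each remaining cold plate would see 5/3, or about 1.67gpm.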

One solution lies with the relationship between flow rate and pressure drop. For a given pipe run, the higher the flow rate the greater the pressure drop. We can use this relationship to control flow by measuring pressure drop.

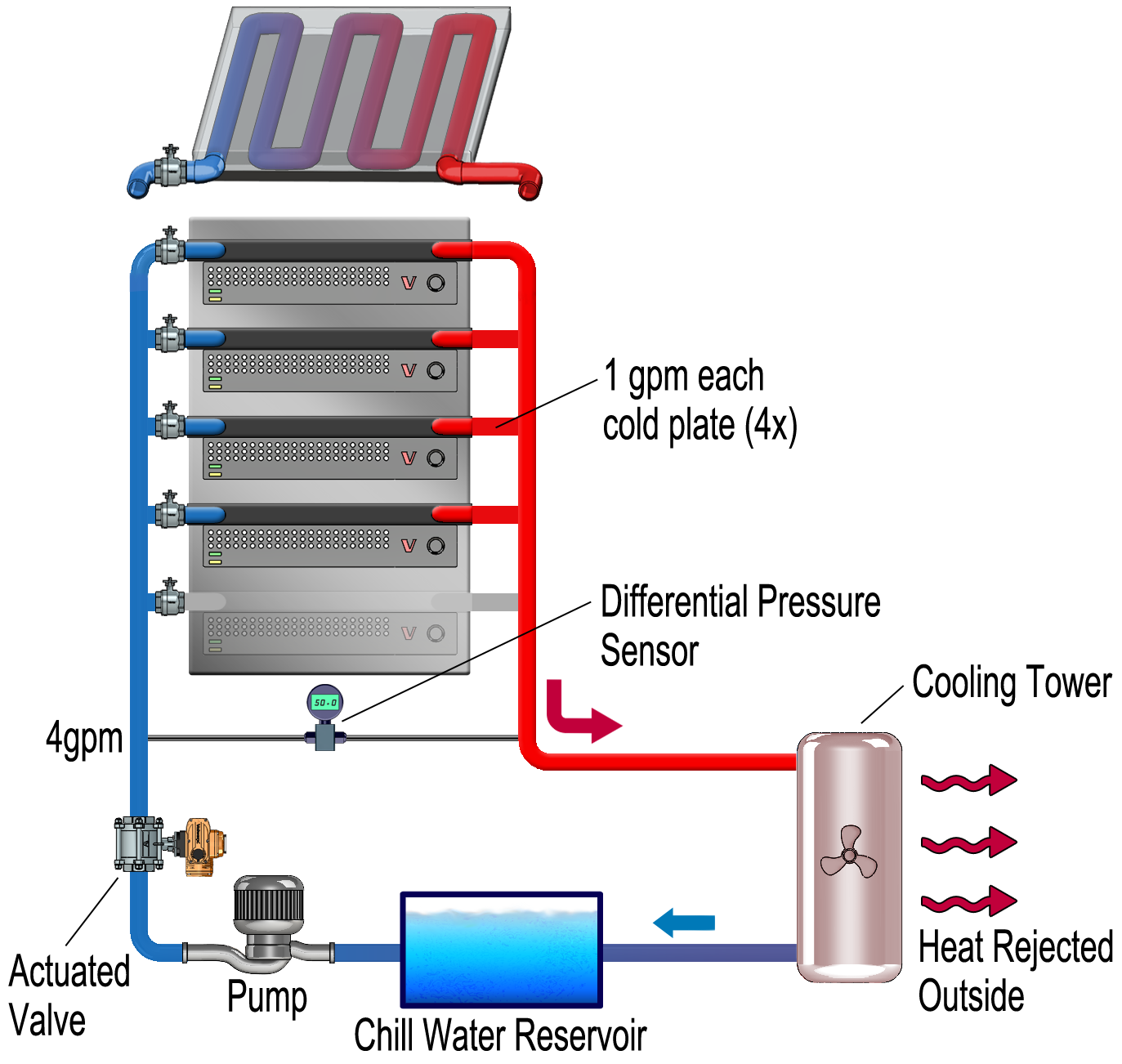

Fig. 7 – Cooling Loop with Differential Pressure Sensor

Fig. 7 shows the same cooling loop but with a differential pressure sensor added to measure the pressure drop across the cold plates. Let's assume that under nominal conditions (i.e. 5 cold plates at 1gpm each) the pressure drop is 1psi. However, when we remove a cold plate the flow through each remaining plate increases, so the pressure drop increases as well. For illustrative purposes, let's assume the pressure drop is now 1.4psi.

Here's where an actuated valve comes in. Using the pressure differential for feedback, we can throttle the flow rate down until the pressure drop is back to 1psi, which gives us a new total flow rate of 4gpm. The flow rate can be adjusted anywhere from the maximum to zero. So if, for instance, two servers are removed, the valve is throttled further to 3gpm, the 1gpm per cold plate is maintained, and so on.
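The feedback idea can be illustrated with a toy simulation. Everything in it is an assumption for illustration: the pressure drop is modeled as a power law of per-plate flow, with the exponent (1.5) picked only because it roughly reproduces the 1.4psi figure used above (real systems typically fall somewhere between 1 for laminar flow and 2 for fully turbulent flow), and the controller is a bare integral-style loop with a hypothetical gain, not a description of any particular actuator.

```python
def pressure_drop_psi(total_gpm, active_plates, exponent=1.5):
    """Toy model: 1 psi at 1 gpm per plate, rising as a power law of per-plate flow."""
    per_plate_gpm = total_gpm / active_plates
    return 1.0 * per_plate_gpm ** exponent


def throttle_to_setpoint(total_gpm, active_plates, setpoint_psi=1.0,
                         gain=0.5, tolerance_psi=1e-3, max_steps=200):
    """Trim total flow step by step until the measured pressure drop hits the setpoint."""
    for _ in range(max_steps):
        error_psi = pressure_drop_psi(total_gpm, active_plates) - setpoint_psi
        if abs(error_psi) < tolerance_psi:
            break
        # Pressure drop too high -> close the valve a little; too low -> open it.
        total_gpm = max(0.0, total_gpm - gain * error_psi)
    return total_gpm


print(f"dP with one server removed: {pressure_drop_psi(5.0, 4):.2f} psi")
print(f"throttled flow, 4 plates: {throttle_to_setpoint(5.0, 4):.2f} gpm")
print(f"throttled flow, 3 plates: {throttle_to_setpoint(5.0, 3):.2f} gpm")
```

In this toy model the loop settles near 4gpm with four cold plates and near 3gpm with three, i.e. about 1gpm per plate in both cases, without the controller ever being told how many servers were removed.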

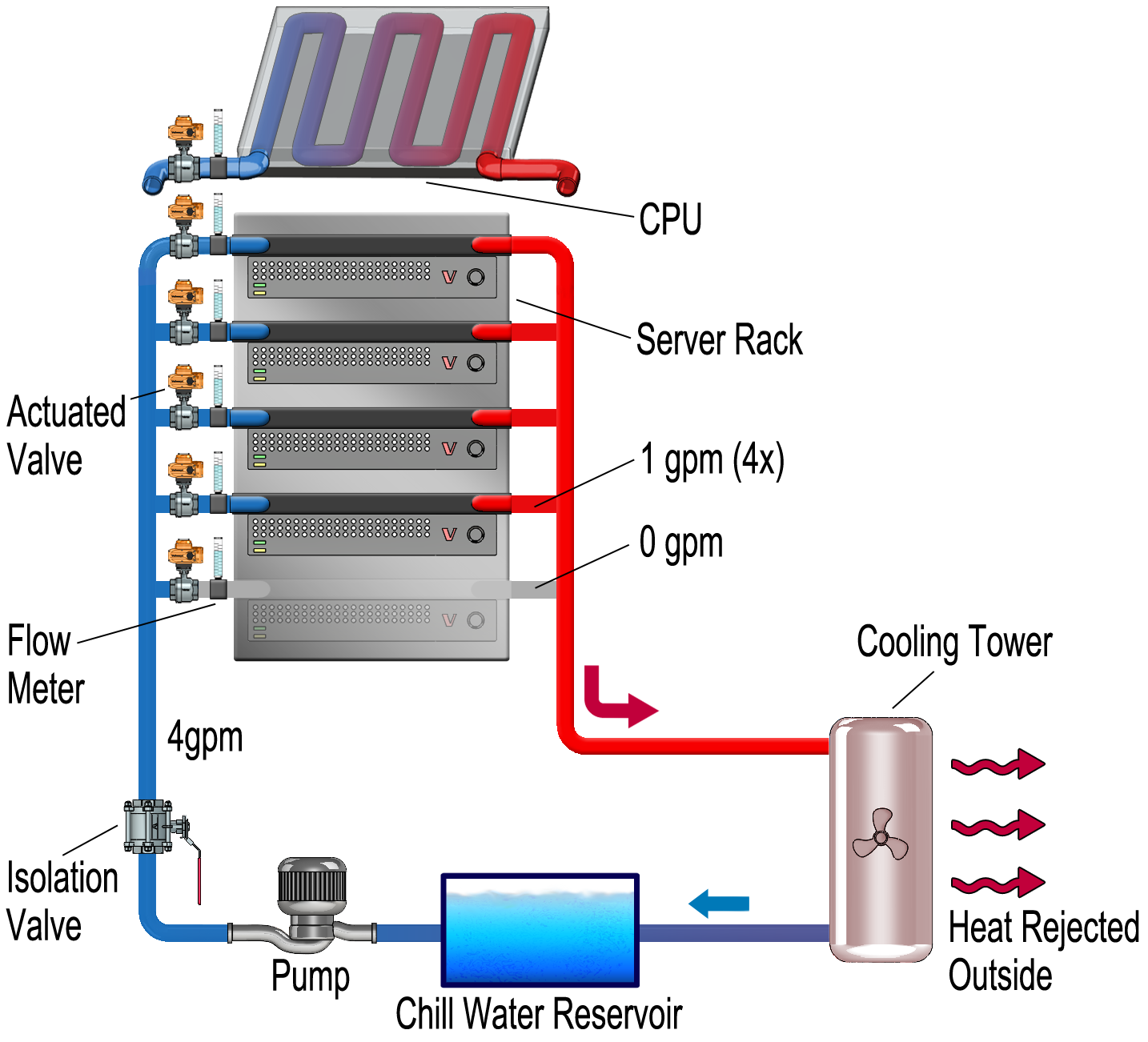

Fig. 8 – Throttled Flow Control with Actuated Valve

One drawback is that this requires both a throttling valve and isolation valves. Again referring to Fig. 7, each cold plate requires its own isolation valve. But what if a valve could serve as both an isolation valve and a control valve?

This is the setup in Fig. 8. Each cold plate cooling loop has a throttling valve that, when closed, also isolates the loop. In addition, a flow sensor provides feedback for throttling control. With this arrangement we are measuring flow directly for each cold plate, so a finer degree of control and faster response can be achieved.
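A minimal sketch of this per-loop arrangement is shown below. The `LoopValve` class, its linear valve characteristic, the 2gpm fully-open flow and the controller gain are all hypothetical stand-ins chosen for illustration; a real valve and flow meter would have their own characteristics and control interface.

```python
from dataclasses import dataclass


@dataclass
class LoopValve:
    """One cold plate loop: a single valve that both throttles and isolates."""
    max_flow_gpm: float = 2.0  # assumed flow with the valve fully open
    position: float = 0.0      # 0.0 = closed, 1.0 = fully open
    isolated: bool = False

    def measured_flow_gpm(self):
        # Stand-in for the flow sensor, assuming a linear valve characteristic.
        return 0.0 if self.isolated else self.position * self.max_flow_gpm

    def isolate(self):
        # Closing the throttling valve fully also isolates the loop.
        self.isolated = True
        self.position = 0.0

    def control_step(self, setpoint_gpm=1.0, gain=0.25):
        # Nudge the valve toward the flow setpoint using the sensor reading.
        if self.isolated:
            return
        error_gpm = setpoint_gpm - self.measured_flow_gpm()
        self.position = min(1.0, max(0.0, self.position + gain * error_gpm))


loops = [LoopValve() for _ in range(5)]
loops[2].isolate()  # pull the third server for maintenance
for _ in range(20):
    for valve in loops:
        valve.control_step()

flows = [round(valve.measured_flow_gpm(), 3) for valve in loops]
print(flows)
```

Because each loop regulates its own flow, isolating one server in this sketch leaves the other four at their 1gpm setpoint with no change to their controllers.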

What valves are used in cold plate cooling?

So valves play an essential role in maintaining proper cooling. But there are a myriad of valve designs and materials. What criteria do we use in valve selection?

The temperature, pressure and material properties of the dielectric fluid are fairly benign: typical operating temperature is ~40°C with a temperature rise of ~10°C across the server rack, and operating pressure is ~60psi. For single-phase cooling the latest dielectric fluids are made of synthetic hydrocarbons or esters. These have a density of about 80-90% that of water with ~5-30 times the viscosity. They are non-conductive and generally non-reactive, and have a service life often exceeding 10 years.

These parameters are easily accommodated by a variety of materials including brass, carbon steel and even PVC. However, it is absolutely critical that the flow path remain pristine, i.e. free of particulates or films. This rules out brass and carbon steel, as the former can slough off material over time and the latter can oxidize. PVC is also not suitable because it can creep when subjected to even mildly elevated temperatures over a long period of time.

Therefore, the material of choice is stainless steel. Further, corrosion-resistant 316 stainless steel. Further still, highly polished 316 stainless steel. A high level of polish eliminates micro-canyons that can lead to material erosion and fluid path contamination.

Are there valves readily available that meet data center cleanliness requirements?

As it turns out, there are. Stainless steel sanitary valves, used in food, beverage and pharma applications, possess all of these properties. For instance, Valworx 5701 series sanitary valves are made of 316 stainless steel polished to 8-10Ra, far smoother than the standard 32Ra, and feature encapsulated seats to avoid particulate traps.

Coupled with a modulating actuator, these valves provide precise control while meeting the cleanliness and performance requirements for data center cooling applications. Further, when coupled with a flow meter, the actuated valve/flow meter combination comprises its own mini-cooling circuit, thereby providing precise temperature control for each server while simplifying maintenance and minimizing downtime effects.

For more information on how Valworx actuated sanitary valves can meet your data center cooling needs, please visit us at Valworx.com.

Sources:

https://stlpartners.com/articles/data-centres/data-center-liquid-cooling/

https://www.datacenterdynamics.com/en/analysis/nvidia-gtc-jensen-huang-data-center-rack-density/

https://jetcool.com/post/how-power-density-is-changing-in-data-centers/

https://infinityturbine.com/pdf/IT-infinity-turbine-nvidia-server-rack-waste-heat-a100-vs-h100-comparison.pdf

https://www.usatoday.com/story/news/nation/2026/02/04/elon-musk-spacex-xai-merge/88488637007/

https://accelsius.com/wp-content/uploads/The-Art-of-Two-Phase-Data-Center-Cooling.pdf

https://www.kaweller.com/selecting-cold-plate-technology/

https://www.belimo.com/us/en_US/blog/a-guide-to-data-center-cooling